Spider SEO

© 2003-2015 by Denis Sureau

https://www.scriptol.com

Free open source software under the GNU GPL licence

SpiderSEO is a script with a graphical user interface that automatically generates meta tags from the content of the web pages of a website. Keywords and description are taken from the content of each page. Other metas are generated optionally. The script works on a local image of the website, not online, but it may be adapted.

Introduction

The cleaner your code, the better the program is likely to work. Here is an example of what I consider clean code:

<head> <meta name="keywords" content="order, clarity"> </head>

And an example of messy code:

<head > < meta nick = "keyword" content = confusion disorder > < /head>

If your code is this messy, the output produced by SpiderSEO may not be what you expect.

The SpiderSEO script is intended to work on a local image of your site (if your computer is your own server, the local image is the site itself).

Before using SpiderSEO, be sure to make a copy of the whole directory that holds the files of the site.
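The backup can be made by hand, or with a few lines of Python. The following is a minimal sketch, not part of SpiderSEO: the function name `backup_site` and the timestamped folder name are assumptions chosen for illustration.

```python
import shutil
import time
from pathlib import Path


def backup_site(site_dir, backup_root=None):
    """Copy the whole site directory to a timestamped folder and return its path.

    backup_site is a hypothetical helper, not a SpiderSEO command.
    """
    site = Path(site_dir).resolve()
    if not site.is_dir():
        raise NotADirectoryError(site)
    # By default the backup is created next to the site directory.
    root = Path(backup_root).resolve() if backup_root else site.parent
    stamp = time.strftime("%Y%m%d-%H%M%S")
    target = root / f"{site.name}-backup-{stamp}"
    shutil.copytree(site, target)  # raises an error if target already exists
    return target
```

For example, `backup_site(r"c:\site")` would create a folder such as `c:\site-backup-20150101-120000` before any page is modified.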

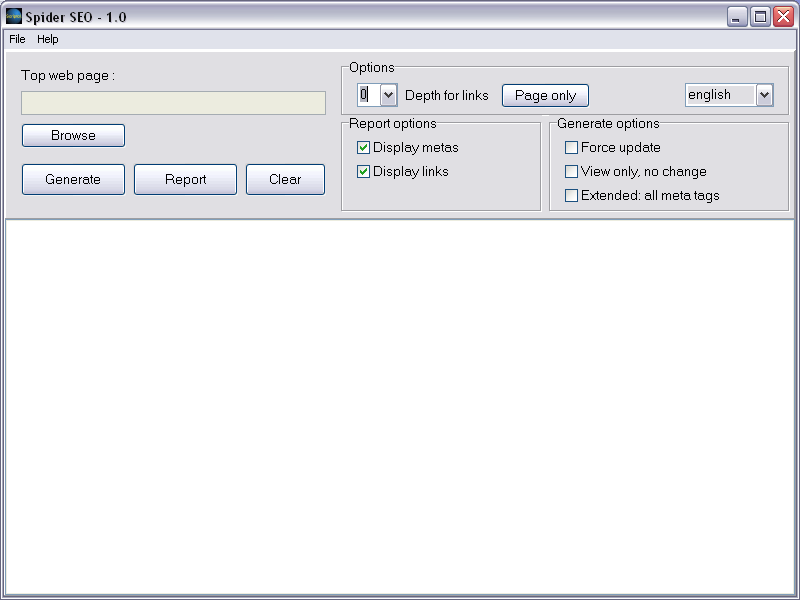

The screen

The large text box displays reports and changes. Buttons and other widgets are detailed below.

Generate and report from the graphical user interface

In the first field, type the full path of the main page of your site, for example:

c:\site\index.html

Then click on the "Generate" button to start the generating process.

Changes are displayed in the large text area.

To report the current meta tags or links in your pages, click on the "Report" button.

The file menu

- The "browse" command lets you locate and select a page to parse.

- The "create list" command opens a dialog for creating a list of pages to process. See below.

- Save: saves the currently displayed results to a file.

- Exit: closes the program.

Creating a list

The graphical interface lets you create a list of links in a file. This file may then be used as the main page to designate which pages to process.

The add button adds a page to the list.

The suppress button removes a page from the list.

The order of processing may be changed with the up and down buttons.

Once the list is created, it is saved with the save button.

You can reload a list with the load button, and add more links.

The new button clears the list.

Press Return to close the window.

Once the list is saved to a file, type the name of this file in the top text field as the main page to process, and set the depth to at least 1.

The help menu

- Manual: displays a short help.

- About the program...

Options in the graphical user interface

- Depth of links

This is the level of recursion for links found in pages.

If the value is 0, links are ignored.

If it is 1, the pages linked from the main page are parsed, but the links inside those pages are ignored.

And so on.

- Dictionary

Select the dictionary for foreign languages.

- Force update

Replace the meta tags even if they already exist and are filled in. Not recommended, as manual creation is usually better than automatic generation.

- Display only

Create or update the metas and display them, without actually changing the pages.

- Extended metas

The default metas are "keywords", "description" and "robots".

This option adds "created", "revisit" and "author".

- Display metas

Display the metas.

- Display links

Display links found in the page.
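The meaning of the depth value can be illustrated with a short Python sketch. This is not the code of SpiderSEO, only an assumed model of depth-limited link following on a local image of a site. The names `LinkCollector`, `local_links` and `pages_to_process` are hypothetical.

```python
from html.parser import HTMLParser
from pathlib import Path


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def local_links(page):
    """Return the local files a page links to (external URLs are skipped)."""
    parser = LinkCollector()
    parser.feed(page.read_text(encoding="utf-8", errors="replace"))
    found = []
    for href in parser.links:
        href = href.split("#")[0].split("?")[0]
        if not href or "://" in href or href.startswith("mailto:"):
            continue
        target = (page.parent / href).resolve()
        if target.is_file():
            found.append(target)
    return found


def pages_to_process(main_page, depth):
    """Depth 0: the main page only. Depth 1: plus the pages it links to. And so on."""
    start = Path(main_page).resolve()
    seen = [start]
    frontier = [start]
    for _ in range(depth):
        next_frontier = []
        for page in frontier:
            for target in local_links(page):
                if target not in seen:
                    seen.append(target)
                    next_frontier.append(target)
        frontier = next_frontier
    return seen
```

With a depth of 0 the function returns only the main page. Each extra level adds the pages linked from the pages found at the previous level, and a page is never listed twice.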

Generate options

Report options

Using the programs from the command line

The graphical user interface actually calls programs you can use directly.

If your site is stored in c:\site and the main page is index.html (it may also be index.php, etc.), just type:

spider c:\site\index.html

...to generate the meta tags.

metarep c:\site\index.html

...to report metas and links.

Options of command line tools

Recursion

You can limit the level of recursion with this option:

-r followed by the depth of recursion, 5 for example (default is 0).

spider -r5 c:\site\index.html

Force

-f replaces already existing meta tags.

The algorithm for generation or replacement is described at the head of the source file spider.sol.

Test first

-v lets you view the results without changing the files.

Display

-q disables the display.
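If you drive the tool from a script, the options above can be assembled as follows. This is a sketch under assumptions: the helper `spider_command` is hypothetical, the `spider` program is assumed to be reachable from the current directory or path, and the manual does not state whether several options may be combined in one call, which the sketch takes for granted.

```python
def spider_command(page, depth=0, force=False, view_only=False, quiet=False):
    """Build the argument list for one run of the spider tool.

    Hypothetical helper; combining several options in one call is an assumption.
    """
    args = ["spider"]
    if depth:
        args.append(f"-r{depth}")  # written as in the manual, for example -r5
    if force:
        args.append("-f")  # replace already existing meta tags
    if view_only:
        args.append("-v")  # view the results without changing the files
    if quiet:
        args.append("-q")  # no display
    args.append(str(page))
    return args


# Preview first, then build the same command without -v to apply the changes.
preview = spider_command(r"c:\site\index.html", depth=1, view_only=True)
apply = spider_command(r"c:\site\index.html", depth=1)
```

Each list can then be passed to `subprocess.run(preview, check=True)` from the Python standard library. Running the `-v` version first matches the "Test first" advice above.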

Selecting pages to update with makelist

Makelist is a script that automatically builds a list of web pages inside a directory. Once the list is built, you may edit it to choose the pages...

- Type:

makelist sitedirectory listname.html

- sitedirectory is the location of the image of your site.

- listname.html is the name of the file that will hold the list of pages.

- Edit the list and remove the files you do not want to change.

- Use this generated file rather than the main page of the site.

The command is:

spider listname.html

or, if you don't want the links parsed recursively beyond the pages in the list:

spider -r1 listname.html

The -r1 flag gives a depth of 1 for recursion. The list itself is the depth 0 of recursion.
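To show what such a list file may look like, here is a minimal Python stand-in for the idea behind makelist. It is not the makelist script itself. The function name `make_list`, the set of page extensions, and the layout of the generated file (a plain HTML page with one link per page) are all assumptions.

```python
import html
from pathlib import Path

PAGE_SUFFIXES = {".html", ".htm", ".php"}  # assumed set of page extensions


def make_list(site_dir, list_path):
    """Write an HTML file holding one link per web page found under site_dir.

    Hypothetical stand-in for makelist; the real output format may differ.
    """
    site = Path(site_dir).resolve()
    out = Path(list_path).resolve()
    pages = sorted(
        p for p in site.rglob("*")
        if p.is_file() and p.suffix.lower() in PAGE_SUFFIXES and p.resolve() != out
    )
    lines = ["<html>", "<body>"]
    for page in pages:
        href = html.escape(page.as_posix(), quote=True)
        lines.append(f'<a href="{href}">{html.escape(page.name)}</a><br>')
    lines += ["</body>", "</html>"]
    out.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return pages
```

Because each page is a separate line of the generated file, removing a page from the processing is a matter of deleting its line in a text editor.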

Foreign languages

To use SpiderSEO with another human language, you have to replace the small.en list of useless words with the equivalent for that language.

A small.xx file may be easily created with the help of dictmake, a set of scripts available here.
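The role of a list of useless words can be shown with a short Python sketch. It is only an illustration: the actual algorithm is the one described in spider.sol, and ranking words by frequency is an assumption made here. The function name `candidate_keywords` is hypothetical, and the useless words are passed as a set because the format of the small.en file is not described in this manual.

```python
import re
from collections import Counter


def candidate_keywords(text, useless_words, limit=10):
    """Return the most frequent words of a text, ignoring the useless ones.

    Hypothetical illustration; SpiderSEO's own selection rules may differ.
    """
    # Sequences of letters only, so accented words of other languages are kept.
    words = re.findall(r"[^\W\d_]+", text.lower())
    counts = Counter(
        w for w in words if len(w) > 2 and w not in useless_words
    )
    return [word for word, _ in counts.most_common(limit)]
```

With an English list such as `{"the", "and"}`, articles and conjunctions never reach the keywords. Switching language means passing the set of useless words for that language instead.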

Download

- Download Spider SEO.

Resources

- The Scriptol compiler. To compile the scripts to PHP or C++.

- Dictionary Maker. Scripts to help in building dictionaries.