The Year 2009 of the Web

In 2008, Google improved the search experience by introducing much finer detection of duplicate content, which spelled the end of many directories and sites filled with RSS feeds.

In 2009, the main search engines addressed the problem of duplicate content within a site by introducing the canonical tag (rel="canonical"), which lets a webmaster designate the preferred URL for a page.

In 2009, the range of services offered by Google is expected to continue to grow. This is already the case for Earth, which now lets you explore the ocean, and for Maps, which can locate mobile phones.

The search engine itself should also undergo changes to provide more relevant results, and webmasters will have to revise their optimization methods.
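To make the canonical tag concrete, here is a minimal sketch in Python of how a crawler might read it from a page. The sample page and its URL are hypothetical, and the helper names `CanonicalFinder` and `find_canonical` are introduced only for this illustration; real search engines certainly do more than this.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of the first <link rel="canonical"> in a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        # Only <link> tags matter, and only the first canonical one is kept.
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in `html`, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical page: a sorted listing that duplicates the main products page.
page = """
<html><head>
<title>Products, sorted by price</title>
<link rel="canonical" href="http://www.example.com/products.html">
</head><body>...</body></html>
"""

print(find_canonical(page))
```

All the variants of a page (sorted, filtered, with session parameters) can declare the same canonical URL, so the engine indexes a single version instead of treating them as duplicates.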

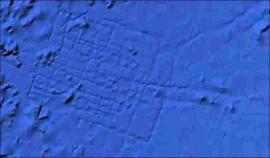

Did Google Earth find Atlantis?

The latest version of Earth, which lets you explore the ocean floor, is said to have led to an astonishing discovery.

A British aerospace graduate observed what look like the traces of a sunken city off the Canary Islands (31°15'15.53" N, 24°15'30.53" W), discernible in the image.

Atlantis experts said that the coordinates correspond to one of the possible locations of the city described in the writings of the ancient Greek philosopher Plato.

But Google gives an entirely different explanation:

"In this case, however, what users are seeing is an artifact of the data collection process.

Bathymetric (or sea floor terrain) data is often collected from boats using sonar to take measurements of the sea floor.

The lines reflect the path of the boat as it gathers the data.

The fact that there are blank spots between each of these lines is a sign of how little we really know about the world's oceans."

Lovers of myths and legends will be disappointed. However, Earth has led to other amazing discoveries, such as a primeval forest in Mozambique home to unknown animals, and it has not finished surprising us.

Encarta is over

What caused the death of Encarta, the CD-ROM encyclopedia of which Microsoft sold millions of copies? Wikipedia? No, the Google search engine!

Microsoft has had to face the facts: people no longer look up information as before, by opening an encyclopedia. They now search the Web, and image search complements articles with an unlimited supply of pictures.

Besides the search engine, tools like Maps and Earth (or Virtual Earth for Microsoft) provide a source of geographical knowledge next to which static documents look rather poor.

Encarta was launched in 1993 at the same price as other encyclopedias on CD, $395. It was a multimedia atlas, holding 11,000 photos and digitized sound.

It competed with Britannica, and the market leader was Compton's Interactive Encyclopedia. But Encarta struggled and captured only 3% of the market.

Worse, Compton's then lowered its price to $129. Microsoft was obliged to follow, and Encarta was cut to $99. Sales then increased, reaching a million copies in 1994.

A free limited version was introduced in 2000, while the price continued to decline, falling to $23 in 2009.

Microsoft has decided to cease selling the CDs this year, and the Encarta site will also be closed.

Geocities now closed

A pillar of the Web has collapsed under the effect of the economic crisis, combined with the negotiations toward an agreement between Yahoo and Microsoft. Yahoo announced the end of new registrations, with final closure of the free hosting service planned for late 2009. The site displayed the following message:

Sorry, new Geocities accounts are no longer available.

Geocities, founded in 1994 as a social site, was bought by Yahoo in 1999 for 4.7 billion dollars! But its audience declined from 15 million visitors in March 2008 to 11 million in March 2009.

Where to find free hosting?

The end of Geocities marks the end of an era, but it does not leave webmasters without resources. Bloggers have many free hosting options, such as WordPress.com and Blogger.com, and other forms of free hosting are listed in our comparison of hosts.

Digg, statistics and plots

The success of Digg, a bookmarking and news site that receives 10,000 submissions a day and has 1 million visitors, spawned many imitators, some of which enjoyed a measure of success.

But spammers banding together to push their articles onto the home page drove users away and caused the decline of most Digg-likes. Some that were popular a few months ago now have only marginal traffic.

According to a study based on a statistical tool that analyzes the submissions and the sources of articles on Digg, the giant itself appears to be an indirect victim of spammers. In trying to fight them by filtering sources, its algorithm has produced the following outcome: 46% of the articles that reach the home page come from only 50 sites!

The list is given in the study. The algorithm appears to treat these sites as a whitelist, which does not necessarily mean that their articles are better than others.

Two plots have also been revealed. On one side, a group of "liberals" (that is, those who want to change everything except what suits them), named JournoList, work together to promote articles by group members and bury submissions from competing sites.

Facing them, "conservatives" (that is, those who do not want to change anything unless it bothers them) have formed a cartel called the Digg Patriots, who work together to do the same for conservative ideas. They bury articles from liberals and promote the sites of club members to the home page.

The End of Domain Tasting

In the wild jungle that is the Web, good things still happen from time to time. Domain tasting is a spam practice that takes advantage of the 5-day Add Grace Period during which a registrar can cancel the registration of a domain name at no charge.

This facility, designed to avoid charges in case of error, was hijacked by profiteers who could register domain names at no cost, point them to pages showing ads, drop them after 5 days, and repeat indefinitely without ever buying anything. The operation is profitable when done en masse.

For registries, this meant unnecessary management costs on a huge number of operations, as the statistics show.

So for one year, ICANN charged $0.20 for each cancellation above a certain percentage. Then in July 2009, the fee was set at $6.75. The result: the total disappearance of domain tasting.

- 17,668,750 cancellations in June 2008.

- 2,785,605 in July 2008.

- 58,218 in July 2009.
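To put these figures in proportion, the short Python sketch below computes how far each month sits below the June 2008 level. It uses only the three counts quoted above; the helper name `percent_drop` is introduced for this illustration. By this arithmetic, cancellations fell by roughly 84% in the first month and by more than 99% after a year.

```python
# Monthly cancellation counts quoted in the list above.
cancellations = {
    "June 2008": 17_668_750,
    "July 2008": 2_785_605,
    "July 2009": 58_218,
}


def percent_drop(before, after):
    """Relative decrease from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


baseline = cancellations["June 2008"]
for month, count in cancellations.items():
    drop = percent_drop(baseline, count)
    print(f"{month}: {count:>10,} cancellations ({drop:.2f}% below June 2008)")
```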

The remaining cancellations correspond to registrars correcting errors. One can dream of a similar measure against email spam. Yahoo has launched the Centmail project for this purpose: a fee of 1 cent would be paid for every email sent.

Kumo, a new search engine

Kumo was the codename of the new search engine that Microsoft was expected to launch in 2009; the final name is Bing. Screenshots had already leaked onto the Web before the engine went live.

The layout of the search categories resembles the new Google interface that appears when you click on "Show options".

An internal memo was made public:

From: Satya Nadella

Sent: Monday, March 02, 2009 4:18 PM

To: Microsoft - All Employees (QBDG)

Subject: Announcement: Internal Search Test Experience

The Search team needs you. We've been working hard to improve our search service and want to share the progress we are making with you. We are launching a new program called kumo.com for employees to try and provide feedback. Kumo.com exists only inside the corporate network, and in order to get enough feedback we will be redirecting internal live.com traffic over to the test site in the coming days. Kumo is the codename we have chosen for the internal test.

Microsoft also appears interested in knowledge engines such as Wolfram Alpha, since the firm has acquired Powerset, which offers a similar technology for searching Wikipedia.

Oracle to buy Sun: Implications

The acquisition of Sun (Java, MySQL, Solaris, Open Office) by Oracle (databases) has drawn rather negative reactions on forums.

What are the implications of this acquisition for users and webmasters?

- Solaris

Solaris is the operating system most often used to run an Oracle database. Now that it owns this open source system, Oracle has less reason to get involved in Linux, so this is bad news for Linux.

- Java

Java is open source, and a GNU implementation of the class library exists.

The acquisition should not change much for the language, but NetBeans, the excellent development tool, competes with Oracle's JDeveloper, and Oracle is also a member of the Eclipse Foundation. So this is bad news for NetBeans.

- MySQL

The MySQL database competes with the light version of Oracle, Oracle Express, so it is not in the new owner's interest to develop it.

But even though Sun owns the brand name, it is unclear what it actually owns of MySQL, because the database is open source and several versions are maintained by independent groups of developers, some of them more sophisticated than Sun's version.

MySQL also competes with another free database, PostgreSQL, which is better.

- Open Office

By buying Sun, Oracle becomes the owner of StarOffice, the commercial version, rather than of Open Office, the free open source version. (The free version was later forked and became LibreOffice.)

For webmasters and many players in free software, this announcement marks a black day; they would have preferred an acquisition by Google. The merger creates a direct competitor to IBM, which had also made a bid that was judged less attractive.

PageRank Algorithm Used for the Ecosystem

Researchers at the University of Chicago had the idea of adapting the PageRank algorithm to interactions in nature in order to predict failures in ecosystems.

Nature, fauna and flora together, is a large web in which species eat one another, so each depends more or less heavily on the existence of other animal or plant species.

The dependence of one species on another, for example of the panda on bamboo, works like a link from the latter to the former. Food is the link between species. Pandas are popular with bamboo in the way a site is popular among other sites and receives many links from them.

Remove a popular site, and all the sites that receive links from it lose their ranking and therefore their traffic.

The researchers needed only minor modifications to adapt the algorithm to ecosystems, but the principle was reversed.

On the Web, a page is important if many pages point to it. In nature, conversely, a species is important if it points to important species. In our example, bamboo has many links to pandas.

The food chain remains very complex. The death of a creature, the researchers note, returns its body to the compost that nourishes others.

By comparing the PageRank algorithm adapted to nature with other computational methods already used in the field, which are much more difficult to implement, the researchers found that they gave the same results.

PageRank is expected to help save endangered species through a better understanding of ecosystems and greater predictability.
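As a rough illustration of the reversed principle, the Python sketch below runs a plain PageRank by power iteration on a tiny food web whose links have been flipped, so that importance flows from each eater to what it eats. The species other than panda and bamboo, the damping factor and the iteration count are assumptions chosen for this example. It is a simplification for intuition, not the researchers' actual model.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Plain PageRank by power iteration.

    `links` maps each node to the list of nodes it points to.
    """
    nodes = sorted(set(links) | {t for targets in links.values() for t in targets})
    rank = {node: 1.0 / len(nodes) for node in nodes}
    for _ in range(iterations):
        new_rank = {node: (1.0 - damping) / len(nodes) for node in nodes}
        for node in nodes:
            targets = links.get(node, [])
            if targets:
                share = damping * rank[node] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Node without outgoing links: spread its rank over all nodes.
                share = damping * rank[node] / len(nodes)
                for other in nodes:
                    new_rank[other] += share
        rank = new_rank
    return rank


def reverse(links):
    """Flip every link: A -> B becomes B -> A."""
    flipped = {}
    for source, targets in links.items():
        flipped.setdefault(source, [])
        for target in targets:
            flipped.setdefault(target, []).append(source)
    return flipped


# Hypothetical toy food web. Links run from food to eater, as in the article.
food_web = {
    "bamboo": ["panda", "herbivore_b"],
    "grass": ["herbivore_b"],
    "herbivore_b": ["predator"],
    "panda": [],
    "predator": [],
}

# Reversed principle: a species is important if important species depend on it.
importance = pagerank(reverse(food_web))
for species, score in sorted(importance.items(), key=lambda item: -item[1]):
    print(f"{species:12s} {score:.3f}")
```

In this toy web, bamboo comes out on top because two species feed on it, one of which in turn feeds a predator. Removing the top-ranked species is the change most likely to ripple through the rest of the web.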

Reference: PLoS Computational Biology. Dr. Stefano Allesina and Dr. Mercedes Pascual.

Safari 4 inspired by Chrome

The latest version of Safari is very fast. JavaScript runs 42 times faster than on Internet Explorer 7 and 3.5 times faster than on Firefox 3, according to CNET's benchmarks.

But looking at the new appearance of Apple's browser, there is an obvious similarity with Google's.

The tabs sit at the top of the window, each with its own navigation bar, and the interface is clean and streamlined.

Safari 4

Chrome

The buttons are similar, except for the Reload button, which is located in the URL field as in Internet Explorer. The main difference is that Safari keeps a separate search box.

The new browser incorporates a panel of the most visited sites, as Opera and Chrome already did. This is not the only improvement.

Safari is clearly intended to be a browser for Web 2.0 applications, as IE is expected to become in 2011.

PageRank penalties: One year later

More than a year ago, some of the most popular sites saw their displayed PageRank drop from 7 to 4, and we assumed it was a temporary sanction from Google. But 16 months later, we must note that this is not the case: the penalties are still in place.

They have no real impact on traffic, but are intended to discourage webmasters from using their PR as a bargaining chip for selling backlinks or as a basis for link exchanges.

Among them were well-known names:

- Forbes.com PR 7 -> PR 5

- Washingtonpost.com PR 7 -> PR 4

- Engadget.com PR 7 -> PR 4

- Autoblog.com PR 6 -> PR 4

One would have thought that SEO professionals were safe from such a misadventure. On the contrary, this is the sector in which the largest proportion of sites was affected:

- Searchenginejournal.com PR 7 -> PR 4

- Seroundtable.com PR 7 -> PR 4

- and other lesser-known names.

One website (kinderstart.com) sued Google, and its case was dismissed.

However, we can verify that some of the affected sites have recovered their PR. Some sites have managed to return to a policy of natural links and others have not, but since this does not affect traffic, and the PR shown in the green bar (and logically the PR transmitted) is totally ignored by the general public, it matters little to them.
